
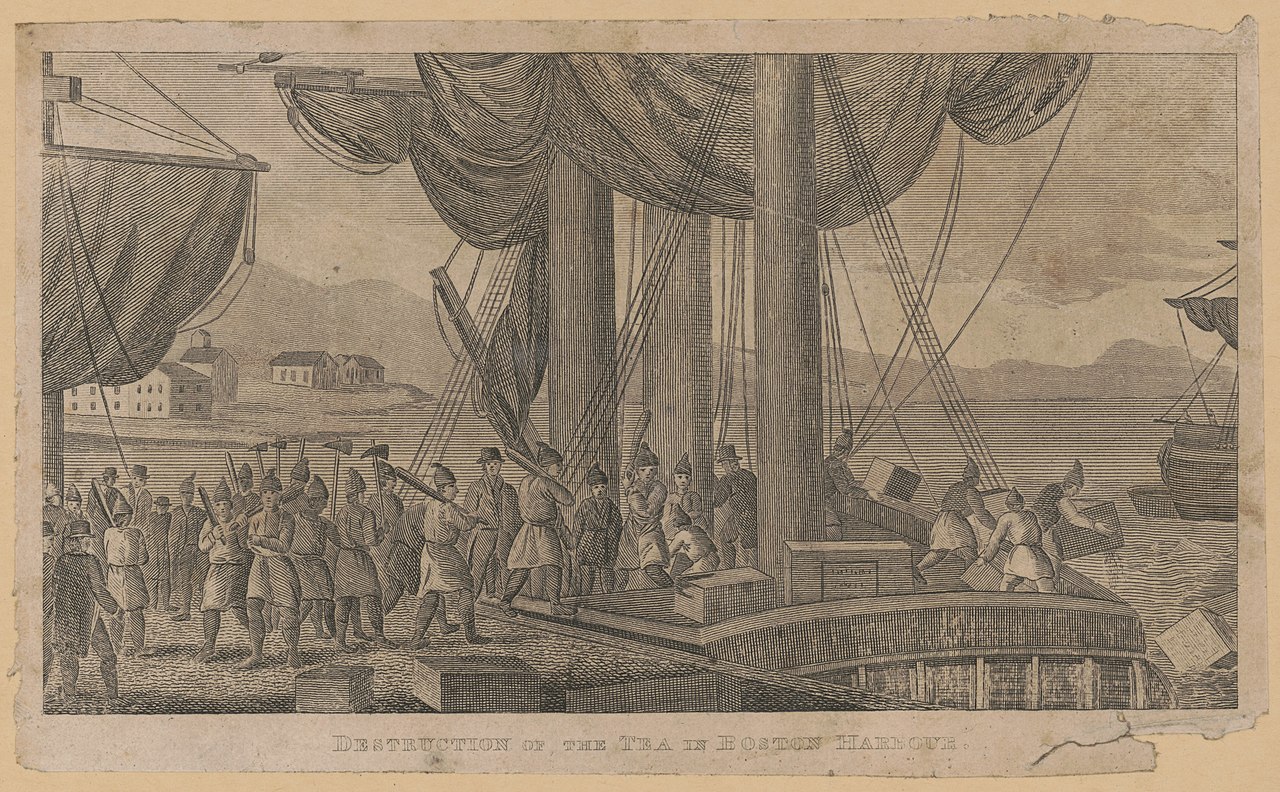

WORDS BY Anthony Ruggiero

The American Revolution marked a turning point in the lives of colonists living in America who, after years of perceived mistreatment by the British, finally declared their independence. Although this separation from Britain was meant to benefit all those who advocated for it in the colonies, the results for American women offered no discernible improvement in their lives. Women were often criticised for their attempts at political participation. Additionally, women were forced to rely on their male counterparts for such things as landholding. Furthermore, although it could be argued that women used their spouses as vehicles to promote their political agenda, their reliance on their spouses was itself a sign of the gender barriers that existed during the period. Women were also subjected to brutal treatment that was emblematic of their subordinate role in society.

The Boston 'Tea Party' (Popular Graphic Arts [Public domain])

Throughout the events of the revolution and thereafter, women actively participated in promoting the agenda of revolutionists and unsuccessfully advocated for political recognition. For example, in Providence in 1775, when tea was being burned in opposition to Britain’s tea tax, women actively participated in the protest.[1] The Virginia Gazette article, Providence Women Burn Tea, recognized women’s participation in the protest; however, it perpetuated a stereotype that women have an “evil tendency of continuing the habit of drinking tea.”[2] The article also used a negative description of women, representing the burning of the tea as the “funeral of Madam Souchong.”[3] This description perpetuates the contemporary societal view of women as ‘low’, as prostitutes.[4] Women’s attempted involvement in politics was also scrutinized.
For example, Abigail Adams urged her husband, John Adams, then a delegate to the Continental Congress, to recognize the rights of women in the new nation, stating that women would cause a “rebellion” if their voices were not heard.[5] In response, John Adams described her boldness as laughable, thus showing his disregard for her claims.[6]

The Virginia Gazette discusses the British Taxation of the Colonies (Alexander Purdie [Public domain])

Although it can be argued that some women, as part of the social elite, were able to promote their political views by using their status, their reliance on their male counterparts also demonstrated that their role remained inferior. For example, a land sale by Anne Holden, a member of the Daughters of Liberty, to four men can be viewed as a way to insert herself into the political framework, since women were unable to vote regardless of the size of their landholding.[7] By transferring her land to these four men, it can be argued, she sought to influence the men’s voting choices. However, this action also showed the reliance of women on men to promote a female political agenda.

Lastly, women during this period were subjected to brutal treatment. For example, Mary Philips described being raped by a British soldier who claimed she was secretly working with “rebels.”[8]

Despite the promotion of female stereotypes and male oppression, many women continued to actively participate in promoting the agenda of revolutionists and advocated (unsuccessfully) for political recognition. For example, the Daughters of Liberty were a political group that surfaced in response to British taxation in the colonies during the American Revolution,[9] in particular the Townshend Acts of 1767, a series of measures that imposed customs duties on goods imported from Britain such as glass, paints, lead, paper and tea.
According to Carol Berkin’s film, Women as Major Participants in the Revolutionary War, women took a political stance by burning tea and, instead of buying English cloth, making their own, which became known as “Liberty Cloth.”[10] Although a good majority of women were unable to leave their homes during the Revolution because they were expected to take care of their children, this period gave rise to what would become known as ‘republican motherhood’. The term applied to women who were educated primarily so that they could educate their children in the ways of ‘moral living’.[11] Women also played a pivotal role in influencing their children’s political views.[12] President Thomas Jefferson remarked, "I thought it essential to give [my daughters] a solid education, which might enable them, when they become mothers, to educate their own daughters, and even to direct the course for sons, should their fathers be lost, or incapable, or inattentive."[13]

During the Revolution, women also began questioning their inferior status in relation to their husbands. Many women published poems voicing their frustrations and their desire to be free. For example, one line from a poem read, “That woman, dear woman, shall ever be free. Nor more shall the wife, all as meek as a lamb.”[14] This period spawned a rhetoric of freedom, both from Great Britain and for women within society.

The American Revolution was supposed to liberate all those colonists of European descent from oppression, but instead only highlighted the oppression women faced within early American society. Despite their attempts to participate in politics, women such as Abigail Adams were still lambasted for their efforts. Furthermore, women were forced to rely on men within the upper classes of society in an attempt to promote their political views. While women strove for social and political success, it was men who benefitted.
Anthony Ruggiero is currently a High School History Teacher in New York City, New York. In addition to teaching, he has been published in several magazines, such as History Is Now, Historic-UK, Tudor Life, Discover Britain, and The Odd Historian. Anthony has also written for the Culture-Exchange blog, and The Freelance History Writer blog. His work can also be viewed on his Twitter handle: @Anthony10290122

[1] Providence Women Burn Tea, 1775. In Norton, Mary Beth. Major Problems in American Women’s History. Fifth Edition. Houghton Mifflin, 2014, 112.

[2] Providence Women Burn Tea, 1775, 112.

[3] Ibid, 113.

[4] Ibid, 113.

[5] Ibid, 113.

[6] Ibid, 114.

[7] Ibid, 120.

[8] Ibid, 116.

[9] Rebecca B. Brooks, The Daughters of Liberty: Who Were They and What Did They Do? History of Massachusetts, 2017.

[10] Carol Berkin, Women as Major Participants in the Revolutionary War, 2014.

[11] Lauren M. Eleuteri, Patriots in the kitchen: The role of Republican motherhood in Jeffersonian America. Retrieved from http://elonuniversity.contentdm.oclc.org/cdm/ref/collection/p15446coll2/id/36.

[12] Patriots in the kitchen: The role of Republican motherhood in Jeffersonian America.

[13] Ibid.

[14] Tho’ husbands are tyrants, their wives will be free. New York Journal, 1770.


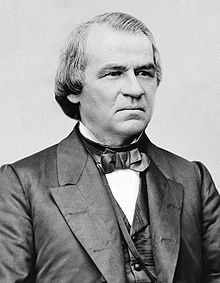

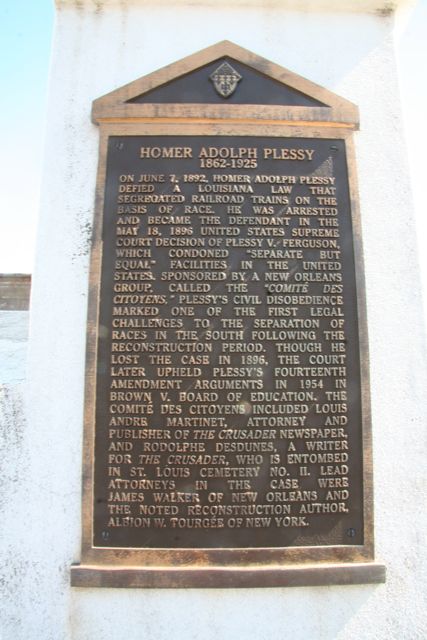

Words by Anthony Ruggiero

At the end of the Civil War, throughout the Reconstruction Era and onwards, there existed - and still exist - unresolved racial issues. During Reconstruction, the distribution of land within the southern states was ineffectively handled, with both black and white southerners being forced to share, resulting in black citizens becoming financially dependent on wealthier merchants. Furthermore, an increasing racial divide surfaced as a result of the Jim Crow laws as well as other policies and groups, halting the economic, societal, and political progress of black citizens. Although some progress has been made in today’s society, there still exist lurking racial issues that are legacies of the Civil War.

President Andrew Johnson

One of the issues that went unresolved at the end of the Civil War was land redistribution and former slaves’ inability to support themselves economically. Although former slaves were promised land within the South at the end of the Civil War, President Andrew Johnson’s 1865 federal order returned land to the old plantation owners. Many African Americans therefore had to sharecrop the land, which often led to long leases and other problems. For example, black farmers were unable to acquire the equipment needed to work their farms independently.[1] Farmers were thus forced to pledge their crops to white southern merchants in order to obtain loans for the necessary supplies. This system effectively ‘bound the farmer to the merchant and restricted his options to buy elsewhere or dispose of his crop in the most advantageous manner.’[2] In order to pay back the loan, farmers focused on growing a cash crop such as cotton. As a result, however, they often neglected food crops, which forced them to borrow more money from the merchants to feed their families.[3]

Another unresolved issue was protecting the rights of black citizens throughout Reconstruction.
Although former slaves were formally recognized as citizens and could vote under the Constitution, these ‘new’ citizens faced further obstacles in the form of the “black codes” and, later, the Jim Crow laws.[4] Implemented within the South, these laws entrenched the racial divide between white and black citizens by mandating separate sections within restaurants, restricting black mobility in business, and requiring literacy exams in order to vote.[5] Furthermore, black citizens were singled out by law enforcement, owing to prevailing racial stereotypes that cast them as unlawful, and placed within the convict lease system. Under ‘convict leasing’, black prisoners were forced to work in areas such as mining, under harsh conditions and subject to beatings by prison guards.[6] Prisons were allowed to do this under a clause within the Thirteenth Amendment which stated, "neither slavery nor involuntary servitude, except as a punishment for crime whereof the party shall have been duly convicted, shall exist within the United States, or any place subject to their jurisdiction",[7] thus effectively placing prisoners back into a system of slavery.

Furthermore, this period entrenched the doctrine of “separate but equal”. In the 1896 case Plessy v. Ferguson, the United States Supreme Court ruled against Homer Plessy, who, with the backing of the New Orleans Committee of Citizens, had challenged his arrest for refusing to leave a whites-only railroad car; in doing so, the Court upheld segregation laws. This era was marked by its de jure racial segregation policies.[8] In addition, anti-black groups, such as the Ku Klux Klan, would murder and torture black citizens.[9]

Deadwildcat at en.wikipedia [CC BY-SA (https://creativecommons.org/licenses/by-sa/3.0)]

In today’s society, there still exists a racial divide within the US.
Although there has been progress since the height of the civil rights movement of the 1960s regarding blatant racial segregation, there still remains a gap between white and black citizens across a number of areas. For example, the black middle class has grown only to approximately ten percent of all black households; the black unemployment rate remains twice that of whites, with no real push to resolve this issue.[10] Furthermore, a racial divide still remains within the United States prison system. According to research by Ohio State University professor Michelle Alexander, “there are more blacks in the correctional system today in prison or jail, on probation or parole than in slavery in 1850”.[11] In addition, black citizens are more likely than their white counterparts to be singled out, through racial profiling, as likely to commit crimes.[12]

Furthermore, ‘convict leasing’ is also a recurring issue within the prison system. In 2016, 24,000 prisoners within 29 prisons in 12 states protested against inhumane conditions.[13] In 1979, the Federal Bureau of Prisons, backed by the United States Congress, created a program called the Federal Prison Industries (UNICOR) in order to tackle the downturn of inmates, and thus the lack of revenue.[14] The program paid inmates under one dollar an hour. States such as Virginia and Oklahoma specifically targeted African Americans and Latinos for the program.[15] Overall, the program generated an estimated $500 million in sales, the profits of which were not shared with the workers.[16] As a result, prisoners rebelled against the system by going on strike. Despite this large-scale strike, the system is still utilized through congressional loopholes, thus promoting a new form of slavery.

While the Civil War accomplished the reuniting of two parts of the nation, unresolved issues resulted in a division between its citizens.
The United States government’s ineffective strategy in land redistribution was detrimental to black citizens, who further felt the economic hardships during Reconstruction. Furthermore, the racial tensions that were created thereafter greatly, and negatively, affected black citizens. As a result, many black US citizens feel that racial divide as keenly today in the twenty-first century as they did one hundred years ago.

[1] Douglas-Bower, Devon. Debt Slavery: The Forgotten History of Sharecropping | The Hampton Institute. November 7, 2013. http://www.hamptoninstitution.org/sharecropping.html.

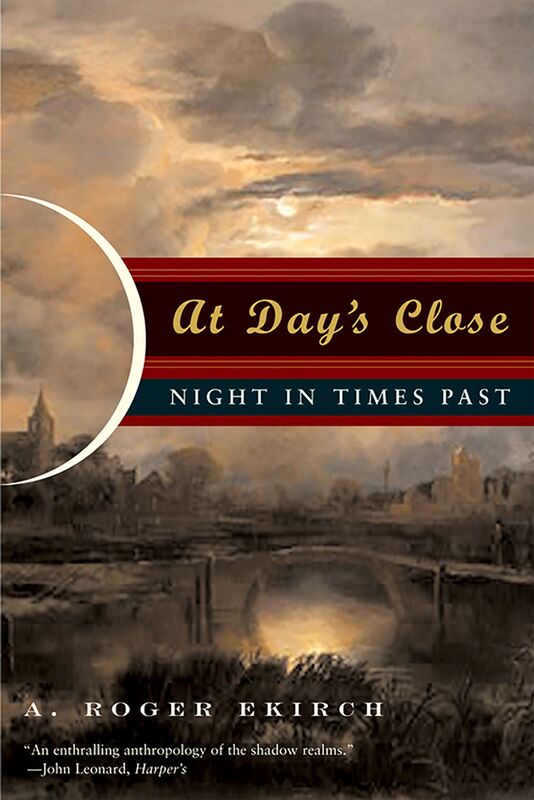

[2] Debt Slavery: The Forgotten History of Sharecropping.

[3] Ibid.

[4] Jim Crow (article) | Period 6: 1865-1898. Khan Academy. https://www.khanacademy.org/humanities/ap-us-history/period-6/apush-south-after-civil-war/a/jim-crow.

[5] Jim Crow (article) | Period 6: 1865-1898.

[6] Douglas-Bower, Devon. Slavery by Another Name: The Convict Lease System | The Hampton Institute. 2013.

[7] Slavery by Another Name: The Convict Lease System.

[8] Jim Crow (article) | Period 6: 1865-1898.

[9] Ibid.

[10] Oskin, Becky. Why US Still Needs a Civil Rights Movement. LiveScience. August 29, 2013. http://www.livescience.com/39291-america-still-needs-civil-rights.html.

[11] Why US Still Needs a Civil Rights Movement.

[12] Ibid.

[13] Love, David A. Slavery in the US Prison System. Prisons | Al Jazeera, Al Jazeera, 2017.

[14] Love, Slavery in the US Prison System.

[15] Ibid.

[16] Ibid.

If you are waking up right now, chances are you have had what clinicians term one period of consolidated sleep. That is, you went to bed yesterday evening and awoke the following day - this morning. In most parts of the world - particularly the industrialised world - consolidated sleep is the norm. By and large, our patterns of sleep and wakefulness (known as our circadian rhythm) are governed by two things: the cycle of nighttime and daytime, and the daily demands on our time. The former is mandated by the rotation of the Earth (not much we can do about that); the latter is determined by a multitude of factors, perhaps the most significant being the daily work that we have to do. In a sense, the vast majority of us don’t have much control over this either. The Industrial Revolution and state-sponsored education have tended to organise the daily routines of most people around the world, meaning that - exceptions notwithstanding - we carry out our ‘work’ during one continuous block during the day and sleep in one continuous block at night (if we are lucky).

It was not always the case.
In fact, until relatively recently your ancestors would most probably have maintained a bi-modal sleeping pattern. They had two sleeps a night. At Day’s Close: Night in Times Past, Roger Ekirch’s revelatory investigation into the social history of what people around the world did at night, draws on a rich and varied trove of evidence from across the centuries and the globe to suggest that we had a ‘first sleep’ and then a ‘second sleep’. The first sleep began a couple of hours after the sun went down and lasted for 3-4 hours. After an hour or so of wakefulness (more on this in a moment), people went back to sleep for another 3-4 hours. During this period of wakefulness between the first and second sleeps, evidence abounds that people did either what was necessary - chopped wood, prepared food, prayed and such - or what was enjoyable - read, talked with bedfellows, had sex and so on. According to Ekirch, the surprising point in his discoveries is not that this practice seems to have been almost entirely forgotten in the post-industrialised world, but that it was such a commonality - references abounding in plain sight - in the first place.

In England, prior to, and even during, the early phases of the Industrial Revolution, records of first and second sleeps are abundant and exist without any attention being drawn to their being in any way unusual. That’s because, according to Ekirch, they weren’t. In 1840, Charles Dickens dropped a ‘casual’ mention into Barnaby Rudge: “He knew this, even in the horror with which he started from his first sleep, and threw up the window…” Ekirch opens his 2001 paper, Sleep We Have Lost: Pre-industrial Slumber in the British Isles (out of which his book, At Day’s Close: Night in Times Past, was developed), with journal notes made by the Scottish novelist Robert Louis Stevenson.
These notes, written about his time spent camping and hiking through the Cévennes in France in 1878, describe with great poetry what he calls “the stirring hour” and “...this nightly resurrection” - that interval between sleeps which, even by Stevenson’s time, was largely a thing forgotten to history. It is a poignant opening to a piece of research that ‘rediscovered’ a practice which, to your ancestors, would almost certainly have been the norm.

Robert Louis Stevenson takes his 'first sleep' in the Cévennes

WHY did we sleep twice?

There have been suggestions that biphasic sleep became lodged in western Europe because of the requirements of early Christianity - monkish orders rising during the darkened hours to recite verses and pray - and that this embedded itself in European Catholicism which, in turn, promoted the same nightly devotions among all strata of society. According to Ekirch, this can’t be the root of segmented sleep, because the practice not only predates early Christianity but also existed across the world in areas which were, until relatively recently, untouched by Christian missionaries and religious conquistadors.

Just why we took two sleeps at night is a complex question, linked perhaps to the dangers our ancestors (and not even too distant ones at that) faced by sleeping through the night - from predators to criminals. Night could be, it must be remembered, a terrifying time for all, dense as it might have been with frightful superstitions, religious paranoia, and the very real criminals that moved unseen. The night time was literally and metaphorically a time when the disreputable elements of life emerged: “Associations with night before the 17th Century were not good,” says Craig Koslofsky. “The night was a place populated by people of disrepute - criminals, prostitutes and drunks.” Breaking sleep, as unnatural as it may seem to us now, could have been fundamental to the survival of our ancestors.
Sleep was a much more vulnerable state for our ancestors to have to endure than for most of us today. Unless the moon was visible at night, the darkness of the pre-industrial world would have been absolute. When people actually took to their beds for their first sleep was, in part, also related to the darkness because, as Professor Ekirch plainly stated in Segmented Sleep in Preindustrial Societies:

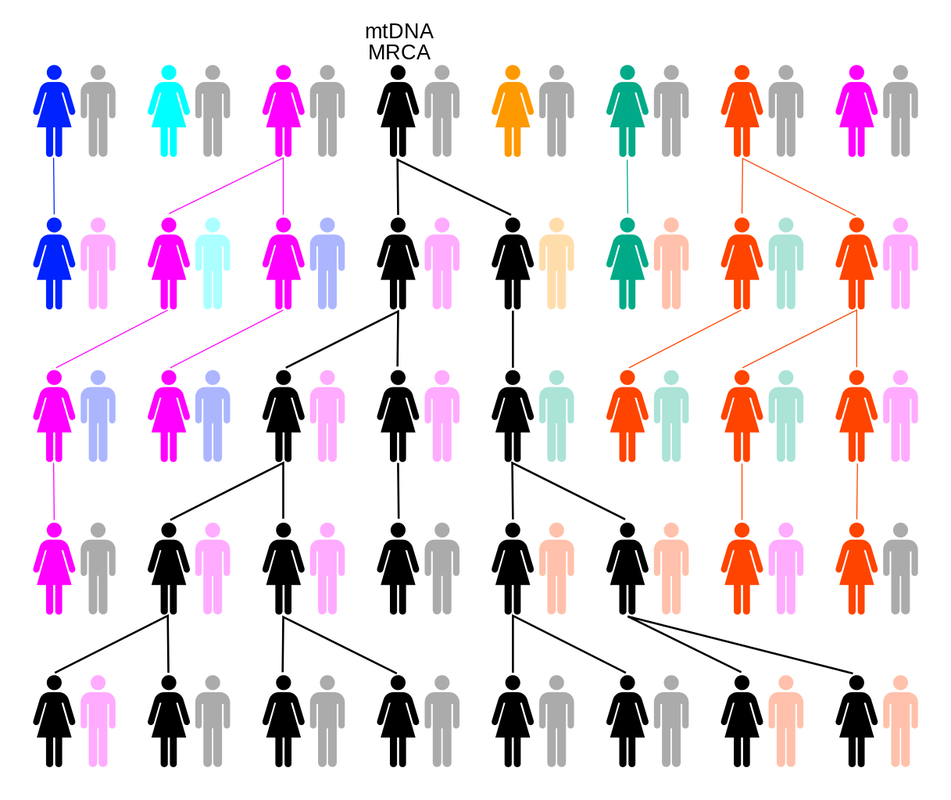

“As in many preindustrial cultures, sleep onset depended less on a fixed timetable than on the existence of things to do.”

Since pre-industrial England relied so heavily on daylight to accomplish these ‘things to do’ - our daily toils - once the night arrived it would have been near-impossible to conduct anything except the least arduous of tasks, such as talking, eating, or sleeping. If the work had been accomplished for the day - in the hours of light during which it was possible to accomplish it - then the timing of the ‘first sleep’ need not have been rigid. However, it cannot be overstated just how utterly devoid of light the night could become in a world before the Industrial Revolution. Unless the moon rendered otherwise, darkness would have been near absolute for anyone who could not afford artificial light - candles, oil lamps and such.

Perhaps the more important question isn’t really why we used to have two sleeps and a ‘break’ but why we stopped the practice. The answer is to be found in artificial light. The wealthier classes were perhaps the first to move away from the ancient ‘two sleep’ pattern because of their earlier access to artificial light - candles, oil lamps, wood fires etc. The Industrial Revolution multiplied the number of light sources, and increased their brightness, across the British Isles. Gas lights - never really ubiquitous outside of major urban centres - nonetheless opened up the night to those who had hitherto retreated from it. Once the electric lightbulb, with its powerful luminescence and lower cost, changed the British night from dark to light, the practice of two sleeps quickly faded into obscurity. No more was it necessary to stop work when the sun went below the horizon; no longer was it necessary to fear the night and barricade oneself behind locked doors.
In short, people began experiencing the night (for both work and leisure) and sleeping later - to the point where the first sleep became the only sleep. In a very short period of time indeed - perhaps as little as one hundred and fifty years - your ancestors went from a nocturnal pattern of sleep that had endured for millennia, whereby we slept and then woke and then slept again, to a consolidated sleep determined primarily by the growth of artificial light. Your ancestors from England almost certainly followed the practice of two sleeps. What is perhaps equally important is that, since England was the first country to industrialise, they were also probably the first to abandon the practice.

Dr. Elliott L. Watson (Co-Editor of Versus History) @thelibrarian6

Bibliography

Ekirch, A. Roger. Segmented Sleep in Preindustrial Societies. (http://www.history.vt.edu/Ekirch/sleep_39_03_715.pdf)

Ekirch, A. Roger. Sleep We Have Lost: Pre-industrial Slumber in the British Isles. (https://pdfs.semanticscholar.org/5f7d/10e30df23c62edc499ff26e909764e8946ad.pdf?_ga=2.82364371.494877159.1561369868-1007184158.1561369868)

Koslofsky, Craig. Evening's Empire: A History of the Night in Early Modern Europe. (Cambridge and New York: Cambridge University Press, 2011)

In short, yes. No matter whether you currently reside in the Americas, Europe, Africa, Australia, or Asia, you are related to royalty. It is a mathematical certainty. You are the product, in a biological and historical sense, of an unbroken line of procreation and survival, dating back in time at least as long as hominids have existed and, most probably, much, much further back than even that. Your ancestors survived for hundreds of thousands, perhaps millions, of years, so you could enjoy that pizza and this article.
It makes sense that, particularly with the rise of genealogy websites and DNA ancestry services, people around the world are increasingly asking one particular question and, perhaps more importantly, are able to have this question answered. The question is this: “Am I related to royalty?” Regardless of the rationale driving this question - whether it be narcissism or practicality (blue blood might make you feel important and it could be very profitable in terms of inheritance rights) - the question is a legitimate one: our current identity is bound so intricately (and often messily) with those who carried our blood through the millennia. We all come from somewhere. Where is that somewhere?

There is also a romance to the uncovering of an ancestry either steeped in, or merely anointed by, royal blood: taking part in the achievements of our predecessors - bringing pride or infamy - can become, rightly or wrongly, part of our very own myth-making as individuals. When was the last time you told someone proudly of the achievements of your own children? Imagine if you could tell those same people that you were related to the man who overthrew King Richard III of England or the Iceni Queen who punished the Romans so defiantly. Confirmation of existence? Perhaps. Bragging rights? Most certainly.

To get back to the question: “Am I descended from royalty?” The popular geneticist Adam Rutherford, in his many works, including A Brief History of Everyone Who Ever Lived, explains the irrefutable mathematics of the situation: there have been ‘only’ about 108 billion Homo sapiens. Taking as his starting point the emergence of what we may call our direct human ancestors (hominids very much like us) 50,000 years ago, Rutherford addresses a common misconception about ancestry: it does not resemble a tree, inasmuch as a tree has separate and distinct branches that extend, in a linear fashion, outwards and away from the trunk.
Tree branches may have smaller branches extending outwards from them, but they are always linear - they never rejoin another branch. They exist in isolation from every other branch except the one from which they sprout. This is an impossible scenario, genealogically speaking. As we are all born of two parents*, then for the ‘family tree’ metaphor to be in any way accurate, we would expect every generation that is born to necessarily take itself one branch further away from everybody else (including the original parents and their relatives), genetically and relationally speaking - into isolation. Metaphorically, the branches should only get further and further away from the trunk of the tree, and can only stretch in two directions - backwards and forwards.

Some ‘simple’ addition tells us this can’t be true. Take the following as true: you have two parents; your parents each had two parents; their parents each had two parents and so on. Add these together and go back as far as you can: 2+4+8+16+32+64 etc. If you do this and go back a ‘mere’ thousand years (about forty generations, at roughly 25 years each), the number you arrive at will be over 1 trillion. That’s 1 trillion people in your direct family tree alone. That number exceeds the sum of all humans that have ever existed - by some way. To extrapolate even further, if there are 8 billion people currently on the planet and they each have 1 trillion people in their own linear family trees, then within the last thousand years there have lived and died more than 8 sextillion humans. That’s 8 followed by 21 zeros. In only the last one thousand years. What if we went back 50,000 years - to the point in time that Rutherford and others see the emergence of Homo sapiens that could be said to correspond, in a behavioural sense, to us today? The mind boggles. And it should. So, what’s happening here and how does this relate to the question in the title?

Genealogy should resemble a tangled net rather than a 'neat' tree.
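The doubling sum above can be checked in a few lines of Python. This is only a sketch: the 25-year generation length (which gives 40 generations per thousand years) is an assumption the article does not state, and the name `ancestor_slots` is made up for illustration. The figures of 108 billion humans ever born and 8 billion alive today are the ones quoted in the text.

```python
def ancestor_slots(generations: int) -> int:
    """Sum 2 + 4 + ... + 2**generations: the positions in a strictly branching tree."""
    return sum(2 ** g for g in range(1, generations + 1))


YEARS = 1000
YEARS_PER_GENERATION = 25  # assumption, not a figure from the article
generations = YEARS // YEARS_PER_GENERATION

slots = ancestor_slots(generations)

HUMANS_EVER = 108_000_000_000  # estimate quoted in the article
LIVING = 8_000_000_000         # current population quoted in the article
TRILLION = 10 ** 12

print(f"{generations} generations give {slots:,} ancestor slots")
print("More than 1 trillion:", slots > TRILLION)
print("More than every human who ever lived:", slots > HUMANS_EVER)
# The article's extrapolation: 8 billion living people x 1 trillion slots each
print(f"Naive total across everyone alive: {LIVING * TRILLION:,}")
```

Under these assumptions the sum comes to roughly 2.2 trillion slots, about twenty times the estimated number of people who have ever lived, so many of those slots must be occupied by the same individuals.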
You see, the number of ancestors that we have increases exponentially as opposed to linearly; it's the difference between calculating 2+4+8+16... as opposed to 1+1+1+1… What this means, and what Rutherford, mathematicians such as Joseph T. Chang, and computer scientists such as Mark Humphrys understand, is that ancestral lineage is not linear at all; it is a complicated mesh of overlapping, shared, repeated, and interconnected relationships. In short, every person on this planet is related, in a genealogical sense, to everybody else. More than this - and this is where things get particularly interesting - we all share common ancestors. Even more than this, we all share the same common ancestor/s!

In order that we understand this (and do so in as simple a manner as possible) let’s go back to Africa. The most recent ‘out of Africa’ hypothesis - that all living humans are descended from Homo sapiens who emerged from, and then left, Africa between 200,000 and 300,000 years ago - rules out parallel evolution (humans evolving in different geographical locations at the same time). What this means is that, if there is only one geographical and evolutionary source for Homo sapiens, then we must, out of necessity, all be related to that source. One of the first significant elements of the human genome that was sequenced was mitochondrial DNA. Not wanting to get unnecessarily complex, mitochondrial DNA is almost exclusively inherited by children from their mothers. Combine this with the understanding of our geo-evolutionary origin (Africa), and it is an absolute certainty that every person alive today can trace, through their mitochondrial DNA, their genealogy all the way back to a single female ancestor. We can do something similar with Y-chromosome DNA, which is passed almost unchanged from father to son.
And so, in the public imagination, we are all descended, through an unbroken series of genetic links, from a single woman and a single man: the so-called ‘Mitochondrial Eve’ and ‘Y-Chromosomal Adam’.

Mitochondrial DNA can be traced through your female ancestors.

Counter-intuitively, these two individuals are unlikely to have been the first humans - they are ‘merely’ the MRCA, or Most Recent Common Ancestor, of all humans alive today. Other humans - probably millions - lived and died without leaving children to contribute to the lineage that would mesh with ours. What is perhaps even more astonishing than this is that, because of the interconnectedness of our species - helped in part by our migrations from village to village, town to town, country to country, and continent to continent - the MRCA of all people living today probably lived just a few thousand years ago!

Now that we have established our messy interconnectedness as Homo sapiens, let’s get back to royalty. Some readers may feel a little cheated by what they have just read - the rather impersonal and blanket claim that ‘we are all related’. “Where’s the romance in that?”, one might ask. Well, let us get more specific. Using the statistical models of people such as Joseph T. Chang, we can circumvent any feeling that our general genealogical relationship to others is somehow boring, and narrow down our ancestral links to more, let us say, exciting specifics. For example, if we disregard only very recent immigration into Europe, the MRCA of all Europeans is either a man or woman who lived in the very recent past - about 600 years ago. Adam Rutherford goes one step further, demonstrating that almost every single European - and therefore anyone of European descent living in the New World - has royalty in their blood. Specifically, they have the Y-chromosome DNA of Charlemagne - Charles the Great - and/or the mitochondria of four out of his five wives.
Charlemagne

It is crucial to make note of something - and this is where we depart from statistics and move into history - the number of human beings currently alive that have, to any discoverable degree, documented ancestry is minuscule. Unless your ancestors enjoyed wealth or significant historical impact, chances are there will be little to no evidence of their existence on this planet. Before the invention of the printing press by Johannes Gutenberg in the 1440s, the world’s documentation was handwritten, some of which could be painstaking, most of which would be costly. Until the moveable-type printing press proliferated around the world, the fact of the matter was this: regardless of where around the world you lived and died, unless your life was viewed as worthy of documentation by someone with the means and the inclination to do so, it was likely to be forever ignored by posterity.

Occasionally, bureaucracy would fortuitously record the lives of common people. For example, the Domesday Book - commissioned by William the Conqueror after his defeat of Harold of Wessex at the Battle of Hastings in 1066 and completed in 1086 - provides historians with a wonderful trove of social history. The ‘Great Survey’ of the people of England and Wales was undertaken for the purposes of taxation - William needed to assert his royal pecuniary rights over the people of his newly conquered lands and, in order to do so, needed to know the levels of wealth and property of all the people occupying these lands. Consequently, though the motive of William of Normandy was to exert his royal control, one important outcome of the Domesday Book was detailed proof of the existence of people who would otherwise have disappeared from the historical record. However, such serendipitous recording of the existence of ‘common people’ was the exception rather than the rule.
In most parts of the world, well beyond the invention of the printing press or, indeed, the advent of the Industrial Revolution, the historical record was primarily oral. Unfortunately, oral history often hits the same roadblocks as written history - it mostly records the lives of only those deemed ‘worthy’ of the effort. In a very roundabout way what this means is that, depending on the geographical origins of your antecedents, the socio-economic route by which your ancestors travelled (by and large, an impoverished one), and whether or not they did something worth noting, chances are there is no evidence whatsoever of their existence. Except for you. You are the evidence of their existence. You may be the only evidence. And this brings us back to statistical models…

Since there may be little to no evidence of your genealogical history beyond your most immediate ancestors, we must take refuge in statistics. Ignoring Benjamin Disraeli’s supposed claim that “There are three kinds of lies: lies, damned lies, and statistics”, in this case the mathematics is irrefutable. If you are of European ancestry you are related to royalty. In fact, if you currently reside in the Americas - including the Caribbean and Bermuda - you are, to a high degree of certainty, related to English royalty specifically:

“For example, almost everyone in the New World must be descended from English royalty - even people of predominantly African or Native American ancestry, because of the long history of intermarriage in the Americas. Similarly, everyone of European ancestry must descend from Muhammad.” (Chang)

To continue, if you live in North America, whether your ancestors left from Plymouth Harbour on the Mayflower in 1620 or fled from Ireland during the potato famine of the mid-nineteenth century, they took with them the DNA of royalty. Whether your ancestors were brutally enslaved and transported to the Americas in chains or were native to the land colonised by Europeans, you are related to royalty - whether it be from the royal houses of England, Europe, Africa, Asia or the Americas. It is a statistical certainty.

Dr. Elliott L. Watson (Co-Editor of Versus History) @thelibrarian6

Bibliography:

Chang, Joseph T. Recent Common Ancestors of all Present-Day Individuals (Yale University, 1999) http://www.stat.yale.edu/~jtc5/papers/CommonAncestors/AAP_99_CommonAncestors_paper.pdf

Olson, S. The Royal We, The Atlantic (May 2002) https://www.theatlantic.com/magazine/archive/2002/05/the-royal-we/302497/

Dr. David Silkenat joined the Versus History Podcast for Episode #80 to discuss the American Civil War.
To celebrate, we would like to give away a gratis copy of David's new book, 'Raising the White Flag: How Surrender Defined the American Civil War', published by The University of North Carolina Press, to one lucky listener of the Podcast. The Amazon page for the book can be found here. The question itself was revealed by Dr Silkenat on the Podcast itself, so all you need to do is download it here and listen! Good luck!

1) Probably the most iconic image of American Independence ever … features loads of British flags in the background!

The famous painting entitled ‘Declaration of Independence’ was by the American artist John Trumbull. This image - which featured on the $2 bill - was not actually commissioned until 1817, some 41 years after the actual ‘Declaration of Independence’ came into effect. Incidentally, the painting does not depict the ‘declaration’ element of 4 July 1776 at all. Rather, it shows the presentation of the draft document to the Continental Congress for consideration prior to signing. Here is another surprise: if one looks at the wall behind the draftees, a collection of British flags is visible, including the King’s Colours - the forerunner of the Union Jack - the very flag that represented the Monarch (George III) from whom the signatories were declaring independence.

2) 8 of the 56 SIGNATORIES were actually born in Britain. 2 were born in England!

It might come as something of a surprise to learn that many of the leading Patriots, signatories of the Declaration of Independence among them, had actually celebrated their British connections at one time or another prior to renouncing them. George Washington, though not himself a signatory, had fought for Britain against the French and their Native American allies during the Seven-Years’ War / French and Indian War 1756-1763, donning the famous British Redcoat. However, perhaps even more surprisingly, two of the signatories were actually born in England, with 8 in total being born in the British Isles.
Robert Morris, who is considered to be one of the architects of the American financial system, was born 3000 miles away in Liverpool. Moreover, Button Gwinnett, the second signatory of the Declaration of Independence, was born in the quiet village of Down Hatherley in Gloucestershire, in 1735.

3) The Declaration of Independence cites the actions OF THE BRITISH KING as the reason for the political separation.

The most well-known part of the Declaration of Independence is the preamble, with the famous line ‘We hold these Truths to be self-evident, that all Men are created equal, that they are endowed by their Creator with certain unalienable Rights, that among these are Life, Liberty and the pursuit of Happiness …’ which many will have heard recited or quoted. However, the lesser-known, but crucially important second part of the Declaration of Independence includes a long list of grievances against the British King, which would have been longer still if all of Thomas Jefferson’s original recommendations had been upheld. In the minds of the signatories, the list provides evidence for the separation between America and Britain. In total, eighteen of these begin with the word ‘He’, referring squarely and solely to the supposed ills committed by the British King, George III. These grievances include - but are not limited to - King George III’s refusal to pass necessary laws, stationing a large number of British troops in the colonies, obstructing justice, imposing taxes without consent and cutting off trade. Therefore, much of the content of the American Declaration of Independence is actually focused on the alleged tyrannical actions of the British sovereign.

I hope that you found that interesting and thanks for reading. You can check out our Podcast on the causes of American Independence here. Plus, the Podcast on why Britain lost the war that raged until 1783 here. To finish, here is the Podcast with my quick overview on why King George III deserves a more positive appraisal. Happy July 4 and Independence Day to each and all!

Patrick @historychappy Co-Editor

Trade between Great Britain and America is currently an important political and economic issue. When President Trump visited the UK in June 2019, preliminary discussions about a potential 'Post-Brexit' trade deal between the two nations were headline news. However, looking back, trade between Britain and America has always been important. The trade in tea, for instance, was at the epicentre of the Boston Tea Party in 1773, when American Patriots threw tea on which they would have to pay British taxes into Boston Harbour in defiance. By 1914, some 141 years later, Britain had more money invested in the USA than it did in Australia and Canada - two of its own Dominions - combined. It may come as something of a surprise to learn that the trade in hats between Britain and America was particularly important in the 18th century. So, did hats really help to cause the American Revolution? Well, just maybe. Here's how ...

The origins of the American Revolution 1775-1783 are often traced back to the end of the Seven-Years' War in 1763. Britain had defeated France and Spain, but in the process incurred a significant national debt which would need to be serviced by extracting taxation from her American colonies. There is truth in this. The mantra of the patriot group ‘Sons of Liberty’ was ‘No taxation without representation’, which alludes to the causal centrality of the issue of taxation implemented by the British Parliament without American colonists being returned to Westminster as MPs.
In addition, the 'King George Proclamation' of 1763 forbade British settlement west of the Appalachian Mountain range on the American continent, in an attempt to minimise the prospect of further costly wars involving the British Army. This served to limit the Colonists' thirst for territorial expansion, the physical barrier to which was now the British Redcoats supposedly there to protect them, rather than the French or Native Americans. Others have traced the roots of the American Revolution back to the very nature of the British settlers themselves. The early colonists took with them the concept of the ‘Freeborn Englishman’, imbued with the inviolable rights handed down from Magna Carta 1215 and the Bill of Rights 1689, which protected them from absolute tyranny and despotism. To this end, historian Piers Brendon has argued: “The Empire carried within it from birth an ideological bacillus that would prove fatal. This was Edmund Burke’s paternalistic doctrine that colonial government was a trust. It was to be so exercised for the benefit of subject people that they would eventually attain their birthright - freedom.” Clearly, this interpretation indicates that the concept of the ‘Freeborn Englishman’ was an underlying causal factor in precipitating revolution, as the British settlers were inherently resistant to ongoing interference from a British Monarch 3000 miles across the Atlantic Ocean. There were, however, events that occurred between the establishment of Jamestown in 1607 and the end of the Seven-Years War in 1763 that served to undermine the relationship between the British colonists in America and the Mother Country. One such event was the passage of the little-known ‘Hat Act’ through Parliament in 1732. In essence, this forbade the colonists in America from exporting hats. Instead, they were obliged to import them from Britain. 
Moreover, it limited the number of apprentices that could be employed by hatmakers in the colonies, thus starving the colonial hat manufacturers of inductees into the profession. In an era where one’s social status was symbolised by apparel, this left the colonists with little choice but to pay the increased prices charged by British milliners for tri-corner hats and mobcaps. Indeed, some colonists suspected that British tailors were exploiting their monopoly position and deliberately sending antiquated garments and ‘seconds’ to the colonies. Whatever the truth, Thomas Jefferson made clear his objection to the Hat Act on the eve of the American Revolution in 1774: By an act passed in the 5th Year of the reign of his late Majesty King George II, an American subject is forbidden to make a hat for himself of the fur which he has taken perhaps on his own soil; an instance of despotism to which no parallel can be produced in the most arbitrary ages of British history. Perhaps Jefferson’s grievance against the Hat Act was genuine. Perhaps it was merely harnessed to help to fan the flames of anti-British sentiment amongst the Colonials on the eve of the Revolution. Perhaps both. Whatever the truth, the 1732 Hat Act demonstrates that the legislative origins of the American Revolution pre-date 1763 and the end of the Seven Years' War. Patrick @historychappy

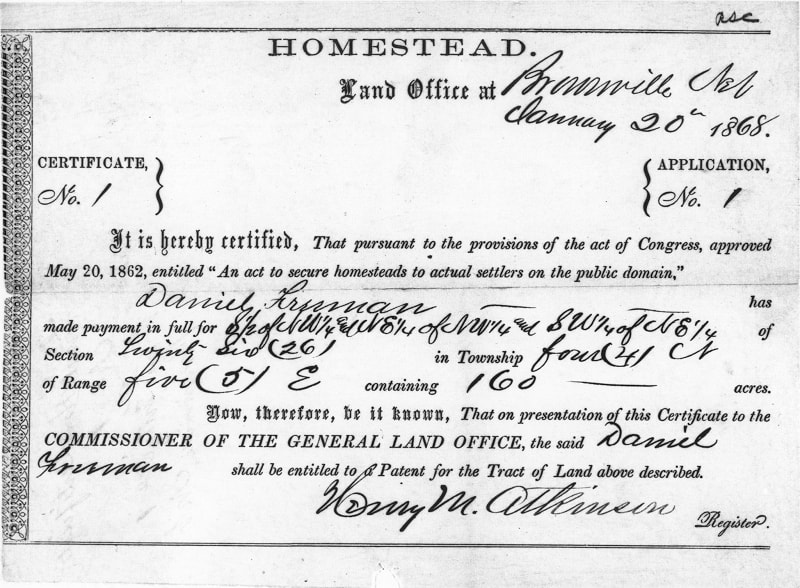

Co-Editor of Versus History

...THAT THE ‘WILD WEST’ ONLY LASTED FOR 30 YEARS.

“Thus the advance of the frontier has meant a steady movement away from the influence of Europe, a steady growth of independence on American lines.” - Frederick Jackson Turner, The Frontier in American History

In his 2001 book, The American West: The Invention of a Myth, David Murdoch asserts that America is ‘exceptional’ inasmuch as it is the only country to have chosen its own self-image: "No other nation has taken a time and place from its past and produced a construct of the imagination equal to America's creation of the West". The ‘selection’ of a particular place and time in history to use in the assembly of a symbolic self-image, if true, is a remarkable piece of history-making. The ‘Wild West’, or the ‘Old West’, is so dense with historical imagery and meaning that a single mention of either of these two terms immediately conjures in the mind gunslingers, sheriffs, wagon trails, gold rushes, saloons, western expansion, the frontier, Davy Crockett, grit and American determination, among countless other imaginings. But did you know that this foundational imagery (imagined or otherwise) is rooted in a period of only thirty years after the American Civil War? That’s 1865 to 1895.

Although westward expansion into the interior of the North American continent began in earnest with President Thomas Jefferson’s Louisiana Purchase in 1803 - for roughly $15 million at the time - the period of time upon which many Americans model their historical character came after the Civil War (1861-1865). More specifically, the ‘Wild West’ comes after Abraham Lincoln signed into law the Homestead Act of 1862. This act, along with subsequent laws, essentially opened up what amounted to roughly 10% of the total area of the contiguous United States to anyone who had not taken up arms against the Federal Government. For free.
160 million acres west of the Mississippi River were given to 1.6 million homesteaders who promised to build on and improve the land they were given - which was usually around 160 acres.

Certificate of the first homestead according to the Homestead Act. Given to Daniel Freeman in Beatrice, Nebraska 1863 (Source: http://www.archives.gov/education/lessons/homestead-act/images/homestead-certificate.jpg; date: 1868)

Regardless of the reasons for the giving away of free land (perhaps a discussion for another blog post), give it away the Federal Government did. And people rushed westward to stake their claim. Millions of them. It is during this period of dynamic western movement and land-claiming (‘land rushes’ were more frequent than ‘gold rushes’) that the imagery of the ‘Wild West’ - historically accurate or otherwise - is forged. From gunslingers like Billy ‘the Kid’ and Jesse James, to the ‘lawless’ frontier towns with saloons, gambling dens, brothels and noble sheriffs such as Wyatt Earp, as well as the ‘taming’ of the American land through hard work and bloody conflict (Wounded Knee was in 1890), the symbolism of the American West was very much created in this period.

William H. Bonney A.K.A. Billy 'the Kid'

Although westward expansion, as a concept, ended in 1912 with the admittance of Arizona to the US as a contiguous land mass, according to Frederick Jackson Turner the ‘frontier life’ that had so completely shaped the character of America was at an end long before then:

"...the frontier has gone, and with its going has closed the first period of American history." Frederick Jackson Turner, The Significance of the Frontier in American History

Turner wrote this in 1893. In those three brief decades after the Civil War, the mythology of the ‘Wild West’ - that which is embedded so deeply in American popular and historical culture - was born.

Westward Expansion in a GIF. Source: Vivid Maps

Elliott L. Watson

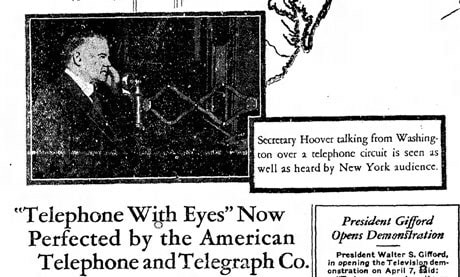

@thelibrarian6

...THAT THE FIRST LONG DISTANCE TELEVISION BROADCAST IN AMERICAN HISTORY STARRED A SOON-TO-BE-PRESIDENT (It’s not who you think).

At 3.25pm on April 7th, 1927, a group of journalists, Bell Laboratory executives, and AT&T President, Walter S. Gifford, listened to a voice transmitting from 200 miles away in Washington, D.C. Gathered in an auditorium in Manhattan, the audience was rapt, held not by the voice - which was falling out of the loudspeaker - but by the grainy image that was moving in time with it. Gifford, sitting in a leather-studded wooden chair that was raised about two feet from the ground, crouched over a standup rotary telephone that was itself perched on a shelf attached to a wardrobe-sized wooden box, and squinted into a small rectangle projecting at 45° from the same ‘wardrobe’. On the small rectangle, a picture of the man whose voice was spreading out from the loudspeaker appeared. It also moved. At 18 frames a second, the monochrome picture, synchronised with the voice, created a staccato but recognisable and moving form - that of Secretary of Commerce and future President of the United States - Herbert Hoover.

Herbert Hoover appearing on America's first long distance television broadcast

Referring to the demonstration, Hoover claimed, “Human genius has now destroyed the impediment of distance in a new respect, and in a manner hitherto unknown.” Despite Hoover’s enthusiasm, the front page of the New York Times proclaimed, the day after the display, that television’s “Commercial Use in Doubt”. If you are interested in watching a short video of the actual demonstration, follow this link: https://www.facebook.com/watch/?v=116047015077905

Dr. Elliott L. Watson @thelibrarian6 |


|
